Every now and then you come across software that solves problems frighteningly well. The Caddy web server, a platform written in Go whose HTTP server covers all the standard cases of day-to-day operations, is a good example.

Installing Caddy on Ubuntu (Noble)

The installation steps are documented, so I skip them here; in addition, I only create a DNS record. I then adjust the configuration in /etc/caddy/Caddyfile accordingly:

caddy1.mhein.net-dump.de {
    root * /usr/share/caddy
    file_server
}

In this example Caddy uses the Caddyfile, a 'configuration format for humans' that follows more natural language semantics. Internally, Caddy uses JSON for its configuration and relies on adapters to translate other formats into it.
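To make the relationship between the two formats a bit more concrete, here is a rough Python sketch of the JSON structure that a site block like the one above corresponds to. It is a simplified approximation of Caddy's JSON layout, not the literal output of the adapter: the server name `srv0`, the helper name `site_to_json`, and the exact nesting are assumptions. Running `caddy adapt --config /etc/caddy/Caddyfile --pretty` on the real file shows the authoritative result.

```python
import json


def site_to_json(host: str, root: str) -> dict:
    """Build a simplified, approximate Caddy JSON config for a static site.

    Assumption: this mirrors the general apps -> http -> servers -> routes
    layout; the real adapter output contains more detail.
    """
    return {
        "apps": {
            "http": {
                "servers": {
                    "srv0": {  # server name is an assumption
                        "listen": [":443"],
                        "routes": [
                            {
                                # the site address becomes a host matcher
                                "match": [{"host": [host]}],
                                "handle": [
                                    # `root * <path>` sets the site root
                                    {"handler": "vars", "root": root},
                                    # `file_server` serves static files from it
                                    {"handler": "file_server"},
                                ],
                                "terminal": True,
                            }
                        ],
                    }
                }
            }
        }
    }


if __name__ == "__main__":
    config = site_to_json("caddy1.mhein.net-dump.de", "/usr/share/caddy")
    print(json.dumps(config, indent=2))
```

The point is less the exact keys than the ratio: four lines of Caddyfile stand in for a nested document that nobody wants to maintain by hand.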

Simply by specifying the host name, and after a restart, I get a Let's Encrypt production certificate and an SSL Labs 'A' rating. That means: TLS 1.3 with TLS_AES_256_GCM_SHA384 or TLS_CHACHA20_POLY1305_SHA256, OCSP stapling, and a valid certificate chain.

In other words, roughly what the Mozilla SSL Configuration Generator would suggest, including all the redirects I need in production. With the holy trinity of Apache2, HAProxy, and Nginx I would not get there in under 100 lines of error-free configuration.
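If you would rather check the negotiated protocol and cipher yourself than rely on an external rating, a small probe with Python's standard `ssl` module is enough. This is a minimal sketch; the function names `probe_tls` and `matches_article` are made up for illustration, and the set of "expected" suites simply repeats the two named above.

```python
import socket
import ssl

# The two TLS 1.3 cipher suites mentioned in the text.
EXPECTED_TLS13_CIPHERS = {
    "TLS_AES_256_GCM_SHA384",
    "TLS_CHACHA20_POLY1305_SHA256",
}


def probe_tls(host: str, port: int = 443, timeout: float = 5.0) -> tuple[str, str]:
    """Connect to host:port and return (protocol version, cipher name)."""
    # The default context verifies the certificate chain and the host name,
    # so an invalid chain raises ssl.SSLCertVerificationError here.
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version(), tls.cipher()[0]


def matches_article(version: str, cipher: str) -> bool:
    """True if the connection uses TLS 1.3 with one of the two suites above."""
    return version == "TLSv1.3" and cipher in EXPECTED_TLS13_CIPHERS


# Example (needs network access to the host):
#   version, cipher = probe_tls("caddy1.mhein.net-dump.de")
#   print(version, cipher, matches_article(version, cipher))
```

Which suite is actually negotiated also depends on the client, so a result outside this set is not necessarily a misconfiguration.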

Reverse Proxy

I create a new DNS record, add a few security headers, and adjust my configuration as follows:

caddy2.mhein.net-dump.de {
    header {
        Permissions-Policy interest-cohort=()
        Strict-Transport-Security max-age=31536000;
        # One quoted value: a second Content-Security-Policy line would replace the first
        Content-Security-Policy "upgrade-insecure-requests; default-src 'self' caddy2.mhein.net-dump.de"
    }
    reverse_proxy 127.0.0.1:8080
}
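The `reverse_proxy 127.0.0.1:8080` line assumes that something is listening on that port. For a quick test, a throwaway backend that echoes the forwarding headers it receives shows what an application behind the proxy gets to see. This is only a sketch using Python's standard library; the header names in `FORWARDED_HEADERS` are my assumption of what the proxy attaches, and `make_backend` is a hypothetical helper.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Assumption: these are the forwarding headers the proxy adds to requests.
FORWARDED_HEADERS = ("X-Forwarded-For", "X-Forwarded-Proto", "X-Forwarded-Host")


class EchoForwardedHandler(BaseHTTPRequestHandler):
    """Reply with the forwarding headers found on the incoming request."""

    def do_GET(self):
        # Header lookup is case-insensitive; "-" marks a missing header.
        lines = [f"{name}: {self.headers.get(name, '-')}" for name in FORWARDED_HEADERS]
        body = ("\n".join(lines) + "\n").encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the sketch quiet


def make_backend(port: int = 8080) -> HTTPServer:
    """Bind to loopback only, matching the reverse_proxy target above."""
    return HTTPServer(("127.0.0.1", port), EchoForwardedHandler)


# To run it next to Caddy:
#   make_backend().serve_forever()
```

Requesting the site through Caddy should then list the client address and `https` as the protocol, while a direct request to port 8080 shows only dashes.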

Once again I get automatic TLS and a well-behaved reverse proxy. Thanks to correct proxy headers and WebSocket streaming out of the box, I can run (almost) any application without adjustments. The HSTS header with a max-age of one year now earns me an 'A+' rating and, in theory, eligibility for the browser vendors' preload lists.
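The "in theory" deserves a footnote: as far as I know, the preload list expects more than a one-year max-age, namely the `includeSubDomains` and `preload` directives as well, plus working HTTPS on all subdomains. The following sketch checks only the header part of that; `parse_hsts` and `preload_ready` are hypothetical helper names, and the criteria encoded here are my reading of the submission requirements, not an official validator.

```python
ONE_YEAR = 31536000  # seconds, the max-age used in the header above


def parse_hsts(value: str) -> dict:
    """Split a Strict-Transport-Security value into lowercase directives."""
    directives = {}
    for part in value.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        name, _, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"')
    return directives


def preload_ready(value: str) -> bool:
    """Check the header-level criteria assumed for preload eligibility."""
    directives = parse_hsts(value)
    try:
        max_age = int(directives.get("max-age", "0"))
    except ValueError:
        return False  # max-age present but not a number
    return (
        max_age >= ONE_YEAR
        and "includesubdomains" in directives
        and "preload" in directives
    )
```

By this check, `max-age=31536000;` on its own would not qualify, whereas `max-age=31536000; includeSubDomains; preload` would. Preloading is hard to undo, so it is worth adding those directives only once every subdomain really serves HTTPS.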

PHP FastCGI Proxy

TL;DR: this is what it looks like:

caddy3.mhein.net-dump.de {
    root * /var/www/html
    # PHP-FPM listening on a TCP port
    php_fastcgi localhost:9000
    # Alternative: PHP-FPM listening on a Unix socket
    # php_fastcgi unix//run/php/phpfpm.sock
}

That is already enough to run, for example, WordPress or Icinga Web behind it.

Conclusion

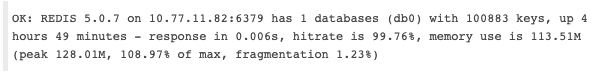

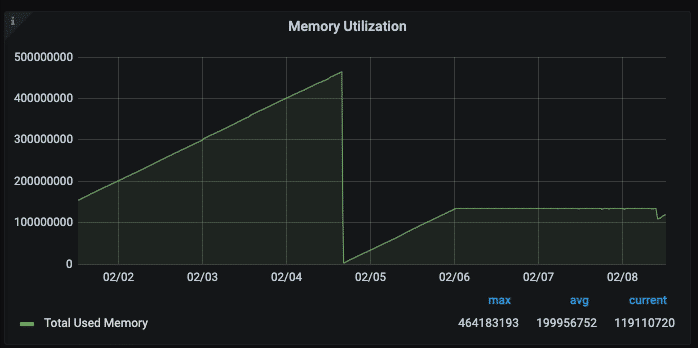

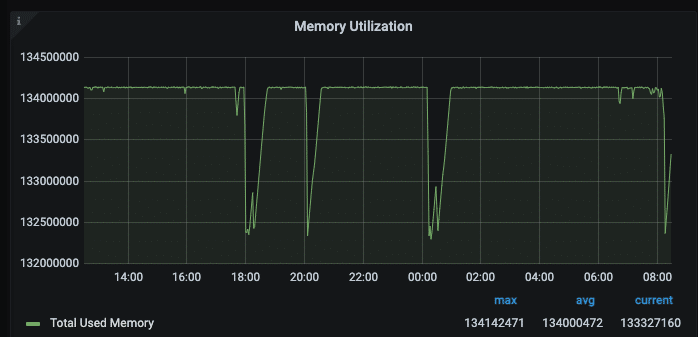

I have to admit that I am quite taken with how effortless all of this is. In practice it means I can spend more time on the 'special cases' instead of the standards. As a result, complicated web deployments work noticeably better in a team and there are fewer sources of error. There are many features I have not even mentioned, for example clustering Caddy instances and storing certificates in Redis or Consul for ingress clusters, the Prometheus exporter, live reload, the on-the-fly API, and so on.

We run a large number of Apache, Nginx, and HAProxy instances here. Each of them has its place and works very well. So I welcome a breath of fresh air and some new motivation to deliver good things even better where it fits. For anyone who has even remotely anything to do with web servers: definitely give it a try!