(c) https://xkcd.com/303/

We’re rewriting our network stack in Icinga 2.11 in order to eliminate bugs with timeouts and connection problems, and to improve the overall performance. Last but not least, we want to use modern library code instead of many thousands of lines of custom-written code. More details can be found in this GitHub issue.

From a developer’s point of view, we’ve evaluated different libraries and frameworks before deciding on a possible solution. Alex created several PoCs and already did a deep dive into several Boost libraries and modern application programming. Keeping up with the new standards and possibilities really is a challenge for me. Always learning, always improving, so over the weekend I read parts of "Boost C++ Application Development Cookbook – Second Edition".

One of the things that is quite resource-consuming in Icinga 2 Core is multithreading with locks, waits and context switching. The more threads you spawn and manage, the more work needs to be done in the kernel, especially on (embedded) hardware with a single CPU core. Jean already shared insights into how Go solves this with goroutines; now I am looking into coroutines in C++.

Coroutine – what’s that?

Typically, a function in a thread runs, waits for locks, and later returns, freeing the locked resource. What if such a function could be suspended at certain points and continue once resources are available again? An added benefit would be reduced wait times for locks.

Boost.Coroutine provides this functionality as a library. Whenever a function is suspended, its frame stays on the coroutine's own stack, and the function is resumed from there at a later point. In the background, the kernel is not needed for context switching, as only registers and stack pointers are saved and swapped. This is done by Boost’s Context library, which works directly with hardware registers and is therefore not portable to every platform. Some architectures are not supported yet (like SPARC).

Boost.Context is a foundational library that provides a sort of cooperative multitasking on a single thread. By providing an abstraction of the current execution state in the current thread, including the stack (with local variables) and stack pointer, all registers and CPU flags, and the instruction pointer, an execution context represents a specific point in the application’s execution path. This is useful for building higher-level abstractions, like coroutines, cooperative threads (userland threads) or an equivalent to the C# keyword yield in C++.

callcc()/continuation provides the means to suspend the current execution path and to transfer execution control, thereby permitting another context to run on the current thread. This stateful transfer mechanism enables a context to suspend execution from within nested functions and, later, to resume from where it was suspended. While the execution path represented by a continuation only runs on a single thread, it can be migrated to another thread at any given time.

A context switch between threads requires system calls (involving the OS kernel), which can cost more than a thousand CPU cycles on x86 CPUs. By contrast, transferring control via callcc()/continuation requires only a few CPU cycles because it does not involve system calls, as it is done within a single thread.

TL;DR: in the way we write our code, we can suspend function calls and free resources for other functions that need them, without the typical thread context switches enforced by the kernel. A deeper dive into coroutines, await and concurrency can be found in this presentation and this blog post.

A simple Example

$ vim coroutine.cpp

#include <boost/coroutine/all.hpp>
#include <iostream>

using namespace boost::coroutines;

void coro(coroutine<void>::push_type &yield)
{
    std::cout << "[coro]: Helloooooooooo" << std::endl;

    /* Suspend here, wait for resume. */
    yield();

    std::cout << "[coro]: Just awesome, this coroutine " << std::endl;
}

int main()
{
    coroutine<void>::pull_type resume{coro};

    /* coro is called once, and returns here. */
    std::cout << "[main]: ....... " << std::endl; //flush here

    /* Now resume the coro. */
    resume();

    std::cout << "[main]: here at NETWAYS! :)" << std::endl;
}

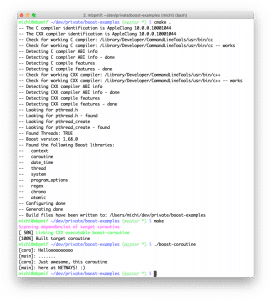

Build it

On macOS, you can install Boost like this (Linux and Windows require some more effort, as listed in the Icinga development docs). You’ll also need CMake and g++/clang as build tools.

brew install ccache boost cmake

Add the following CMakeLists.txt file into the same directory:

$ vim CMakeLists.txt

cmake_minimum_required(VERSION 2.8.8)

set(BOOST_MIN_VERSION "1.66.0")
set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++0x")

find_package(Boost ${BOOST_MIN_VERSION} COMPONENTS context coroutine date_time thread system program_options regex REQUIRED)

# Boost.Coroutine2 (the successor of Boost.Coroutine)
# (1) doesn't even exist in old Boost versions and
# (2) isn't supported by ASIO, yet.
add_definitions(-DBOOST_COROUTINES_NO_DEPRECATION_WARNING)

link_directories(${Boost_LIBRARY_DIRS})
include_directories(${Boost_INCLUDE_DIRS})

set(base_DEPS ${CMAKE_DL_LIBS} ${Boost_LIBRARIES})

set(base_SOURCES
  coroutine.cpp
)

add_executable(coroutine
  ${base_SOURCES}
)

target_link_libraries(coroutine ${base_DEPS})

set_target_properties(
  coroutine PROPERTIES
  FOLDER Bin
  OUTPUT_NAME boost-coroutine
)

Next, run CMake to check for the requirements, and invoke make to build the project afterwards.

cmake .
make

Run and understand the program

$ ./boost-coroutine
[coro]: Helloooooooooo
[main]: .......
[coro]: Just awesome, this coroutine
[main]: here at NETWAYS! :)

void coro(coroutine<void>::push_type &yield)

Up until "yield()", the function logs the first line to stdout.

The first call happens inside the "main()" function: constructing the pull_type with the function runs it right away as a coroutine. The pull_type object, here named "resume" (the name is free to choose!), must then be explicitly invoked in order to resume the coroutine.

coroutine<void>::pull_type resume{coro};

After the first line is logged from the coroutine, it suspends at "yield()" and control returns to the main function, which logs the second line.

[coro]: Helloooooooooo
[main]: .......

Now comes the fun part: let’s resume the coroutine. It doesn’t start from the beginning again; its progress was saved when it suspended, so execution continues right after "yield()". This is exactly what calling "resume()" does.

/* Now resume the coro. */

resume();

That being said, there’s more to log inside the coroutine.

[coro]: Just awesome, this coroutine

After that, it reaches the end and returns to the main function. That one logs the last line and terminates.

[main]: here at NETWAYS! :)

Without a coroutine, such synchronisation between functions and threads would need waits, condition variables and lock guards.
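For comparison, here is a rough sketch of what the same four log lines look like with a plain thread, a mutex and a condition variable from the C++11 standard library. The function name run_with_threads() and the workerTurn flag are made up for this illustration, and the lines are collected in a vector instead of being printed so that the order is easy to check.

```cpp
#include <condition_variable>
#include <mutex>
#include <string>
#include <thread>
#include <vector>

// Hypothetical helper for illustration: replays the four log lines of the
// coroutine example with a worker thread instead of a coroutine.
std::vector<std::string> run_with_threads()
{
    std::vector<std::string> log;
    std::mutex mtx;
    std::condition_variable cv;
    bool workerTurn = true; // whose turn it is; guarded by mtx

    std::thread worker([&] {
        std::unique_lock<std::mutex> lock(mtx);
        log.emplace_back("[coro]: Helloooooooooo");

        // Stand-in for yield(): hand over to main, block until woken again.
        workerTurn = false;
        cv.notify_one();
        cv.wait(lock, [&] { return workerTurn; });

        log.emplace_back("[coro]: Just awesome, this coroutine");

        // Done: hand over to main one last time.
        workerTurn = false;
        cv.notify_one();
    });

    {
        std::unique_lock<std::mutex> lock(mtx);

        // Wait until the worker has logged its first line.
        cv.wait(lock, [&] { return !workerTurn; });
        log.emplace_back("[main]: .......");

        // Stand-in for resume(): wake the worker, wait until it is done.
        workerTurn = true;
        cv.notify_one();
        cv.wait(lock, [&] { return !workerTurn; });
        log.emplace_back("[main]: here at NETWAYS! :)");
    }

    worker.join();
    return log;
}
```

The result has the same order as the coroutine version, but every hand-over goes through a lock, a predicate and a wake-up of another thread by the kernel. The coroutine needs none of that.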

Icinga and Coroutines

With Boost ASIO, the spawn() function wraps coroutines on a higher level and hides the required strand. This is used in the current code and binds a function into its scope. We’re using C++11 lambda functions in most locations.

The following example implements the server side of our API waiting for new connections. An endless loop listens for incoming connections with "server->async_accept()".

Then comes the tricky part:

- 2.9 and before spawned a thread for each connection. This meant lots of threads, context switches and memory leaks with stalled connections.

- 2.10 implemented a thread pool to manage the resources. Handling clients, including asynchronous TLS handshakes, is slower, and there are still many context switches between multiple connections until everything stalls.

- 2.11 spawns a coroutine which handles the client connection. The yield_context is required to suspend/resume the function inside.

void ApiListener::ListenerCoroutineProc(boost::asio::yield_context yc, const std::shared_ptr& server, const std::shared_ptr& sslContext)
{
    namespace asio = boost::asio;

    auto& io (server->get_io_service());

    for (;;) {
        try {
            auto sslConn (std::make_shared(io, *sslContext));

            server->async_accept(sslConn->lowest_layer(), yc);

            asio::spawn(io, [this, sslConn](asio::yield_context yc) { NewClientHandler(yc, sslConn, String(), RoleServer); });
        } catch (const std::exception& ex) {
            Log(LogCritical, "ApiListener")
                << "Cannot accept new connection: " << DiagnosticInformation(ex, false);
        }
    }
}

The client handling is done in "NewClientHandlerInternal()", which follows this flow:

- Asynchronous TLS handshake using the yield context (Boost does the context switches for us; Boost ASIO suspends the functions internally).

- TLS Shutdown if needed (again, yield_context handled by Boost ASIO)

- JSON-RPC client

- Send hello message (and use the context for coroutines)

And again, this is the IOBoundWork done here. For the more CPU-hungry tasks, we’re using a separate CpuBoundWork pool, which again spawns another coroutine. For JSON-RPC clients, this mainly affects syncing runtime objects, config files and the replay logs.

Generally speaking, we’ve replaced our custom thread pool and message queues for IO handling with the power of Boost ASIO, Coroutines and Context thus far.

What’s next?

After finalizing the implementation, testing and benchmarks are on the schedule – snapshot packages are already available for you at packages.icinga.com. Coroutines will certainly help embedded devices with low CPU power to run even faster with many network connections.

Boost ASIO is not yet compatible with Coroutine2. Once it is, the next shift in modernizing our code is planned. Up until then, there are more Boost features available with the move from 1.53 to 1.66. Our developers are hard at work implementing bug fixes and features, and learning all the good things.

There are many cool things under the hood of Icinga 2 Core. If you want to learn more and become a future maintainer, join our adventure! 🙂